Readers Prefer Outputs of AI Trained on Copyrighted Books over Expert Human Writers

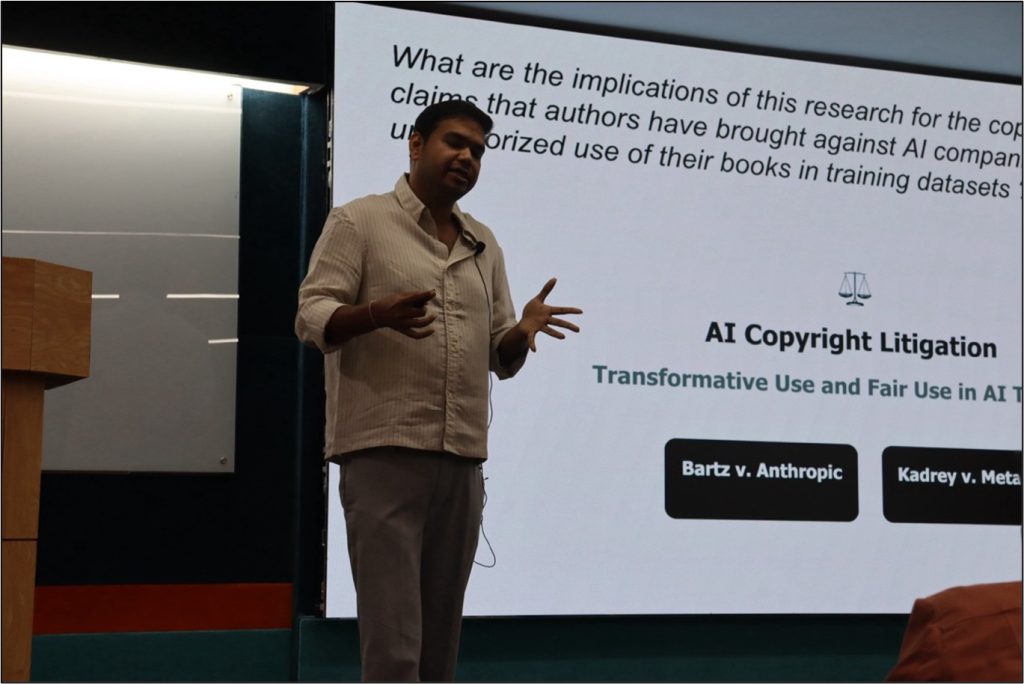

Speaker: Tuhin Chakrabarty, Stony Brook University

Date: 15 December 2025

In an age where artificial intelligence (AI) seems to play a critical role in many sectors, with apprehensions of AI taking over human roles, will readers prefer books written using AI over human writers? What does it mean to preserve copyright when tech companies are using these copyrighted books to train models? Is training models on copyrighted books fair use? These were some of the questions addressed by Tuhin Chakrabarty in his talk.

Based on preliminary data available from Publishers Weekly, adult fiction and non-fiction books accounted for $6.14 billion in 2024. According to a paper by Aaron Chatterji et al on ‘How People use ChatGPT’, one of the biggest uses of ChatGPT was in writing (28.1%) – all forms of writing (technical, creative). The US Bureau of Labor Statistics indicates that writers and authors constitute the majority of the writer profession in the US, who are vulnerable –whether they will be replaced by AI or make it.

Artificial intelligence lacks a distinctive style/voice, which is crucial for good writing. To bypass this, people are instructing ChatGPT to engage in style mimicry, emulating the choices made by a specific writer, which produces a highly derivative story.

What harm does AI cause to the future pool of writers? To address this question, Tuhin Chakrabarty and his team recruited 28 MFA (Master of Fine Arts)-trained expert writers from top programmes in the US such as the Iowa Writers Workshop, Columbia, NYU, UMich, BU and pitted them against large language models (LLMs). The researchers were interested in understanding the extent to which AI and humans emulate the same author’s style. They evaluated this on the basis of writing quality and stylistic fidelity. The preference judgements were collected from both experts and lay readers.

In step 1, 50 authors with acclaimed literary style and voice were selected. In step 2, the MFA-trained experts and LLMs were compared with in-context prompting (on 50 authors). In step 3, the in-context prompting was replaced with fine-tuning (30 authors). A total of 3840 pairwise evaluations were done. In the in-context setup, for stylistic fidelity, the expert readers favoured human writing, while the lay persons preferred AI but not overwhelmingly. When ChatGPT was fine-tuned on an author’s entire lifetime of work, both experts and lay readers had a huge preference for AI writing. For writing quality, the in-context expert results showed high preference for human writing, but the lay readers already preferred AI writing. For the fine-tuned writing, both experts and lay readers highly preferred AI writing.

AI writing was easily detectable in the in-context prompting scenario, but when the models were fine-tuned on an author’s entire work, the AI stylistic ticks disappeared. All the fine-tuned AI output was not attributed as AI written.

How does AI detectability interplay with writing quality preferences? If the text is AI-detectable, then the writing quality preference goes down. The researchers did a mediation analysis of what stylometric features are correlated with AI detector scores and human preferences; they considered cliché density, reading difficulty, and adjectives count. They found that the more clichés there are in the text, the more likely that it will be AI detected and humans would prefer it less.

Considering the cost of producing 100,000 words of raw text in the case of expert writer versus fine-tuned AI model, it was found that this was $25,000 in the case of an expert writer, and much less in the case of a fine-tuned AI model (e.g. $136.50 for author P Everett and $53 for author L Davis). Will readers be able to discern whether the text is AI-generated or written by their favourite human writer, when it is possible to create high-quality books with fine-tuned LLMs?

The speaker’s current work in progress looks at self-published books. Out of 14,000 books in the fiction genre, 2625 books were AI generated, and out of these, more than 600 books were completely AI generated. Another work by the speaker shows that 70% of an entire novel is encoded into pretraining data and it can be regurgitated. Once you have finetuned the model, you have no control and it can regurgitate almost 70–80% of the books – definitely not qualifying for fair use in copyright law.

What are the implications of this research for the copyright infringement claims that authors have brought against AI companies alleging unauthorised use of their books in training datasets? Tuhin Chakrabarty spoke specifically about two copyright litigations, namely, Bartz versus Anthropic and Kadrey versus Meta.

The protocol for fair use in the US includes four factors: (i) purpose and character (commercial vs educational use), (ii) nature of work (published creative works), (3) amount used (portion of work copied), and (iv) market effect (impact of value on original). The results of the speaker’s research (i) demonstrate that unauthorised use of copyrighted data poses threats to creative labour markets; (ii) AI training cannot be treated as monolithic; (iii) fine-tuned models produce undetectable, high-quality outputs competing directly with human creators, potentially devaluing original work and undermining economic incentives that copyright law protects; and (iv) for lay people, it may not even matter.

I joined the BTech programme in Mathematics and Computing at the Indian Institute of Science in 2022. My faculty advisor, Professor Viraj Kumar, has guided my academic journey, and I had the opportunity to work under his mentorship during the summer of 2024.

I joined the BTech programme in Mathematics and Computing at the Indian Institute of Science in 2022. My faculty advisor, Professor Viraj Kumar, has guided my academic journey, and I had the opportunity to work under his mentorship during the summer of 2024.

I joined BTech at IISc in 2024.

I joined BTech at IISc in 2024.

I joined the BTech programme in 2024. My faculty advisor is Professor Viraj Kumar. I am interested in computer science and would like to do research in computer science in the future.

I joined the BTech programme in 2024. My faculty advisor is Professor Viraj Kumar. I am interested in computer science and would like to do research in computer science in the future. Anmol Gill pursues the MTech (Artificial Intelligence) programme at IISc, in the Robert Bosch Centre for Cyber-Physical Systems (

Anmol Gill pursues the MTech (Artificial Intelligence) programme at IISc, in the Robert Bosch Centre for Cyber-Physical Systems (

I recently completed my BTech in August 2024 from the Vellore Institute of Technology, Chennai, with a major in Electronics and Communication Engineering. From May 2024, I am a research intern, supported by the Kotak-IISc AI/ML Centre, in the Human-Interactive Robotics (HiRo) Lab at the Robert Bosch Centre for Cyber-Physical Systems, IISc. I am working under the guidance of Professor Ravi Prakash. My essential area of interest lies in robot learning, which lies under the general area of AI and robotics.

I recently completed my BTech in August 2024 from the Vellore Institute of Technology, Chennai, with a major in Electronics and Communication Engineering. From May 2024, I am a research intern, supported by the Kotak-IISc AI/ML Centre, in the Human-Interactive Robotics (HiRo) Lab at the Robert Bosch Centre for Cyber-Physical Systems, IISc. I am working under the guidance of Professor Ravi Prakash. My essential area of interest lies in robot learning, which lies under the general area of AI and robotics.